This is the first of a series of short tutorials on how I have coded the MeshLab filters for Obscurance and Shape Diameter Function. I will also give some more details on the two functions.

The first thing to point out is that the core calculation for both filters is GPU-based: everything relies on a depth peeling engine. But first, let's see what obscurance and SDF are.

Shape Diameter Function

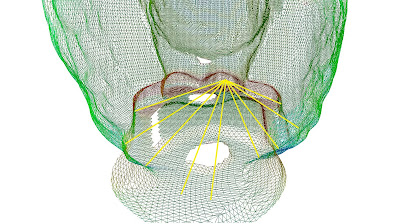

As I wrote in a recent post, SDF is a scalar function defined on the mesh surface. It expresses a measure of the diameter of the object in the neighborhood of each point on the surface. You can think of SDF as a reasonable measure of the 'thickness' of an object. Given a point on the mesh surface, several rays are sent inside a cone, centered around the point's inward normal, to the other side of the mesh (see the image below). The result is a weighted sum of all ray lengths.
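To make the "weighted sum" concrete, here is a minimal CPU sketch in Python. It is not the filter's GPU code. The function name `shape_diameter` is hypothetical, and both the cosine weighting and the normalization by the total weight are assumptions made for illustration, since the weights are not specified above.

```python
import math


def shape_diameter(ray_lengths, ray_angles_deg):
    """Weighted average of the ray lengths measured inside the cone.

    Assumption: each ray is weighted by the cosine of the angle between
    the ray and the cone axis (the inward normal), so rays close to the
    axis count more. The sum is normalized by the total weight.
    """
    if len(ray_lengths) != len(ray_angles_deg):
        raise ValueError("one angle is required for each ray length")
    weights = [math.cos(math.radians(a)) for a in ray_angles_deg]
    total = sum(weights)
    if total <= 0.0:
        raise ValueError("no ray carries a positive weight")
    return sum(w * l for w, l in zip(weights, ray_lengths)) / total


# Three rays at 0, 30 and 60 degrees from the inward normal.
sdf_value = shape_diameter([2.0, 2.2, 2.6], [0.0, 30.0, 60.0])
```

With this weighting, a ray that grazes the edge of the cone influences the result less than a ray that runs along the inward normal.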

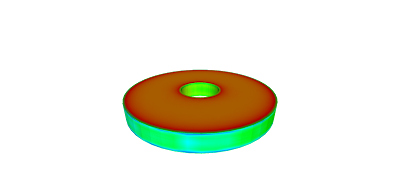

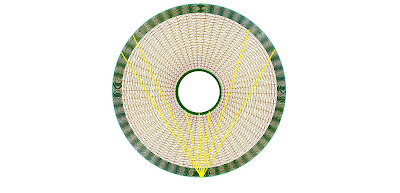

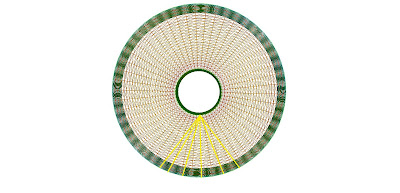

If you are familiar with statistics, you may know that such a method can be affected by the presence of outliers, that is, very long or very short rays that differ greatly from all the other rays. These rays should be ignored before computing the weighted sum. You can do this by calculating the median of all ray lengths and removing values below or above a certain percentile. Some shapes are more affected by this problem than others. Look at this figure:

You can see (if you are as blind as I am, click on the image to enlarge it) that there is something strange with this mesh. I would expect the same color on both the inside and the outside of the model, but on the outside there are some blue blotches (which indicate thicker parts). Look at the two figures below:

As you can see, in the second case the rays are all very similar in length, as they have no chance "to escape" to very distant locations.
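The global outlier removal described in this section (sort the ray lengths, then drop those below or above a percentile) can be sketched on the CPU as follows. This is only an illustration in Python: the function name `remove_outliers` and the 25%/75% cut-offs are assumptions, not values taken from the filter.

```python
import math


def remove_outliers(lengths, low=0.25, high=0.75):
    """Keep only the ray lengths between two percentiles.

    Assumption: `low` and `high` are fractions in [0, 1]; the defaults
    keep roughly the central half of the sorted values.
    """
    if not lengths:
        return []
    ordered = sorted(lengths)
    last = len(ordered) - 1
    first_kept = int(math.floor(low * last))
    last_kept = int(math.ceil(high * last))
    return ordered[first_kept:last_kept + 1]


# One ray escaped to a distant part of the mesh (50.0) and one hit
# almost immediately (0.01); both are discarded.
rays = [1.0, 1.1, 0.9, 1.0, 50.0, 1.05, 0.95, 0.01]
kept = remove_outliers(rays)
```

Note that this needs every ray length of a vertex to be available at once, which is easy on the CPU but costly on the GPU.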

Outlier Removal

Unfortunately, removing outliers on the GPU is a hard task. You would have to trace each ray, save all the intermediate results, and then sort them to find the median. This sounds even worse once you notice that, in GPU computing, you are forced to trace the same ray for all vertices in parallel. For a large mesh, the amount of intermediate results to be saved would be impractical.

The solution (suggested by my teacher, Prof. Cignoni) is to use a local outlier removal. In fact, the removal is done on the fly by supersampling the depth buffer: for each ray that we trace, we take multiple depth values near the point of intersection and output only the median of these values. You may argue that I should have raytraced the depth buffer instead of just sampling it. Indeed, that would be more correct, but it is extremely slow: I tried a raytrace function like the one used in parallax occlusion mapping. If you have a better idea (maybe a clever multi-pass approach), let me know.
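The local removal can be illustrated with a small Python sketch that takes the median of the depth values around a pixel. The real filter does this while sampling the depth buffer on the GPU; here the depth buffer is a plain list of rows, and the name `median_depth`, the square window, and the clamping at the borders are all assumptions made for the example.

```python
import statistics


def median_depth(depth, x, y, radius=1):
    """Median of the depth values in a square window centered on (x, y).

    Assumptions: `depth` is a list of rows (depth[row][column]), the
    window is (2 * radius + 1) pixels wide, and coordinates outside the
    buffer are clamped to its edge.
    """
    height = len(depth)
    width = len(depth[0])
    samples = []
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            sx = min(max(x + dx, 0), width - 1)
            sy = min(max(y + dy, 0), height - 1)
            samples.append(depth[sy][sx])
    return statistics.median(samples)


# The center texel holds a spurious, very distant depth value.
depth_buffer = [
    [0.5, 0.5, 0.5],
    [0.5, 9.0, 0.5],
    [0.5, 0.5, 0.5],
]
filtered = median_depth(depth_buffer, 1, 1)
```

Only one value per ray is written out, so nothing has to be stored and sorted across rays.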

False intersections

Another detail that adds robustness to our SDF function is the removal of false intersections. For each ray, we check the normal at the point of intersection and ignore intersections where that normal points in the same direction as the normal at the ray's point of origin. "Same direction" is actually defined as an angle difference of less than 90°. In a nutshell, we want the point of intersection to approximately face the point of origin of the ray.
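The 90° test reduces to the sign of a dot product: two normals differ by less than 90° exactly when their dot product is positive. A minimal Python sketch, with the hypothetical helper name `is_false_intersection` and normals given as plain 3-tuples:

```python
def is_false_intersection(origin_normal, hit_normal):
    """Return True when the hit should be ignored.

    The hit is rejected when the angle between the normal at the ray
    origin and the normal at the intersection is less than 90 degrees,
    which is the case when their dot product is strictly positive.
    The vectors do not need to be normalized for the sign test.
    """
    dot = sum(a * b for a, b in zip(origin_normal, hit_normal))
    return dot > 0.0


# The hit surface faces back toward the origin: a valid intersection.
valid_hit = is_false_intersection((0.0, 0.0, 1.0), (0.0, 0.0, -1.0))
# The hit surface faces the same way as the origin: ignored.
false_hit = is_false_intersection((0.0, 0.0, 1.0), (0.0, 0.3, 0.9))
```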

A stricter measure

What if you are interested in a stricter measure of the mesh, that is, the antipodal distance at each point on the mesh? In this case, you can simply reduce the cone angle to a very low value such as 20° or maybe 10°. Smaller values are not recommended, as the exact antipodal distance is not well defined for shapes approximated by a triangular mesh. Moreover, you should strongly increase the number of rays to be traced. This is because, in a GPU implementation, it is necessary to sample (uniformly, in this case) the sphere around the mesh and reject the directions that don't fall within the cone around the vertex normal. So, if you use a very small cone, there is a chance that no direction falls within it.
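To get a feeling for how many directions survive the rejection, here is a small Python sketch. It assumes random uniform directions on the sphere and a cone described by its half-angle; both are choices made for this example, and the names `cone_fraction` and `directions_in_cone` are hypothetical.

```python
import math
import random


def cone_fraction(half_angle_deg):
    """Fraction of the unit sphere covered by a cone of the given half-angle."""
    return (1.0 - math.cos(math.radians(half_angle_deg))) / 2.0


def random_unit_vector(rng):
    """Uniform direction on the sphere (uniform z and uniform azimuth)."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    return (r * math.cos(phi), r * math.sin(phi), z)


def directions_in_cone(axis, half_angle_deg, count, rng):
    """Sample `count` directions and keep those inside the cone around `axis`.

    Assumption: `axis` is a unit vector (the vertex normal).
    """
    cos_limit = math.cos(math.radians(half_angle_deg))
    kept = []
    for _ in range(count):
        d = random_unit_vector(rng)
        if sum(a * b for a, b in zip(d, axis)) >= cos_limit:
            kept.append(d)
    return kept


rng = random.Random(0)
kept = directions_in_cone((0.0, 0.0, 1.0), 10.0, 128, rng)
expected = 128 * cone_fraction(10.0)  # expected number of survivors
```

A cone with a 10° half-angle covers only about 0.76% of the sphere, so with 128 sampled directions you should expect roughly one survivor per vertex, and quite possibly none.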
